According to the eSafety Commissioner, deepfakes can wreak havoc on your business's reputation and expose you to fraud, identity theft, hoaxes, ridicule, harassment, and more.

So, what are deepfakes, and what can you do to combat them?

Deepfakes: digital puppetry

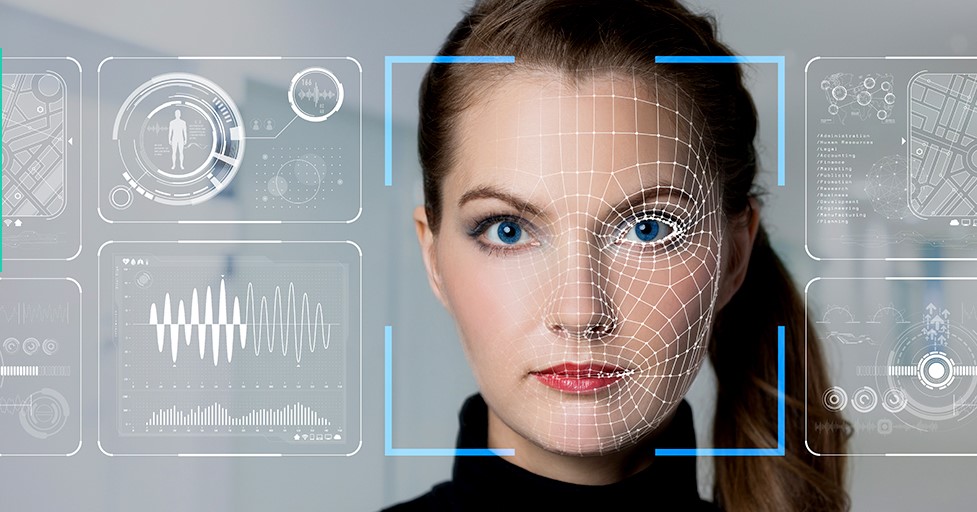

The term ‘deepfake’ was coined in 2017. It describes the use of artificial intelligence to replace a person’s likeness with someone else’s in a recorded video, audio clip, digital image, or even live video. Deepfakes rely on deep learning algorithms to create media that looks or sounds realistic. Tweepfakes (false yet plausible tweets) are also a concern.

Research shows that half of people are fooled by deepfakes, even though they believe they can spot one. And according to the Centre for International Governance Innovation, deepfake videos predominantly target women, not politicians.

Why are they a threat to businesses?

Deepfakes could harm your business's reputation and be used to infiltrate your processes for financial gain. They can also spread harmful rumours far faster and further than before.

For example, cybercriminals could circulate a deepfake of a company’s CEO purporting to announce disastrous results, triggering a shareholder backlash and a significant drop in the share price. Fraudsters already use deepfakes to bypass biometric checks in online processes in sectors such as banking, crypto, gaming, telecommunications, fintech, and insurance.

Here are some ways criminals could use deepfakes against your business:

- Impersonate members of your C-suite to authorise fraudulent financial transactions

- Impersonate an outside business leader, celebrity, or government official to demand funds

- Extort or blackmail you by threatening to release a fabricated audio clip, image, or video

- Spoof someone else’s identity, such as in a live online job interview

As deepfakes become more sophisticated and harder to detect, they could fuel political tension, crime, electoral interference, and even war.

How to identify a deepfake

Look for these clues when checking for deepfakes:

- Is the focus unnaturally soft?

- Are parts of the person’s hair, skin, or face blurrier than the rest of the scene?

- Does the lighting look unnatural? It is often carried over from the original clip, so it may not match the surroundings.

- Is the image pixelated, has the metadata been manipulated, or did it arrive through an unofficial communication channel?

- Are there glitches in continuity, such as gaps in the speech or storyline?

- Does the subject fail to blink, or blink unnaturally?

- Is the audio out of sync or poorly edited?

- If it’s a live online interview, ask the subject to turn their face to the side for a profile view, as news publication ZDNET advises. AI is unlikely to reproduce a side profile convincingly.

However, a review of published research on deepfake detection suggests that deep learning, the same technology used to create deepfakes, is also the best way to detect them.

Minimising the risk of deepfakes to your business

A US report found that fewer than a third of businesses had a plan for dealing with deepfakes. Consider educating your staff about how deepfakes are made and who they commonly target, and work through some potential scenarios. This ABC Online News article could be a starting point for staff training.

To combat deepfakes, consider adopting authenticity technology such as Sensity (a deepfake detection platform) or Operation Minerva. Professional services firm PwC suggests investing in two-factor authentication, blockchain-based verification, digital rights management, trust seals, or video encryption. Facebook’s Deepfake Detection Challenge is also worth a look. If you suspect your business is the victim of a deepfake, report the online harm to the eSafety Commissioner.

How insurance can help

You do have some options for insurance coverage. Some policies, such as cyber insurance or crime insurance, may cover financial loss caused by a deepfake incident. However, that depends on whether and how such policies are triggered by a breach of your IT system or a cyberattack. Be sure to check with us for clarification.